How One Insights Professional Analyzes Feedback Across 60-70 Music Events

60-70 events: supported across a European live-events portfolio

15 countries: approximate spread of event markets and languages

5,000-10,000 responses: open-text scale that used to require manual workarounds

1

At a glance

Company

A first insights hire needed faster, more flexible analysis across varied surveys, languages, file formats, and event teams.

Industry

Festival and live events insights

Operational context

A leading live-events portfolio needed a faster way to analyze feedback across a large spread of music festivals and events. Luiza joined as the first insights hire, building insights as a function while supporting internal teams in an industry where research is not always embedded from the start.

The challenge was not just reporting results. It was bringing order to survey data from 60-70 events, across different countries, languages, formats, and levels of research maturity. Some events had established agency-led research processes. Others had informal surveys, inconsistent structures, or no historical research at all.

AddMaple gave Luiza a faster way to turn raw survey data into patterns, comparisons, and story-ready outputs without losing the context that makes festival feedback useful.

2

The challenge: one insights role, many festivals

Insights is not handled by a large central team. It sits within one role that helps create the research function, supports event teams, and makes sense of results across a broad portfolio of shows.

Before the process was harmonized, each show could run its research in its own way. That was manageable with five or ten events but became difficult across 60-70. Add in international surveys, CSV and SPSS files, different languages, and inconsistent headers, and simply understanding what each file contained could become a project in itself.

The real problem was not whether the data existed. It was how quickly it could be made usable, comparable, and specific enough to help each event team decide what to improve next.

"When you have 60, 70 events, it becomes really complicated because you're always having to sit back and think: okay, I'm talking about this show, and this is the kind of stuff that they want to know."

3

The turning point

The search for a new tool did not begin as a broad transformation project. It began with a very immediate need: finding an easy way to convert an SPSS file to CSV.

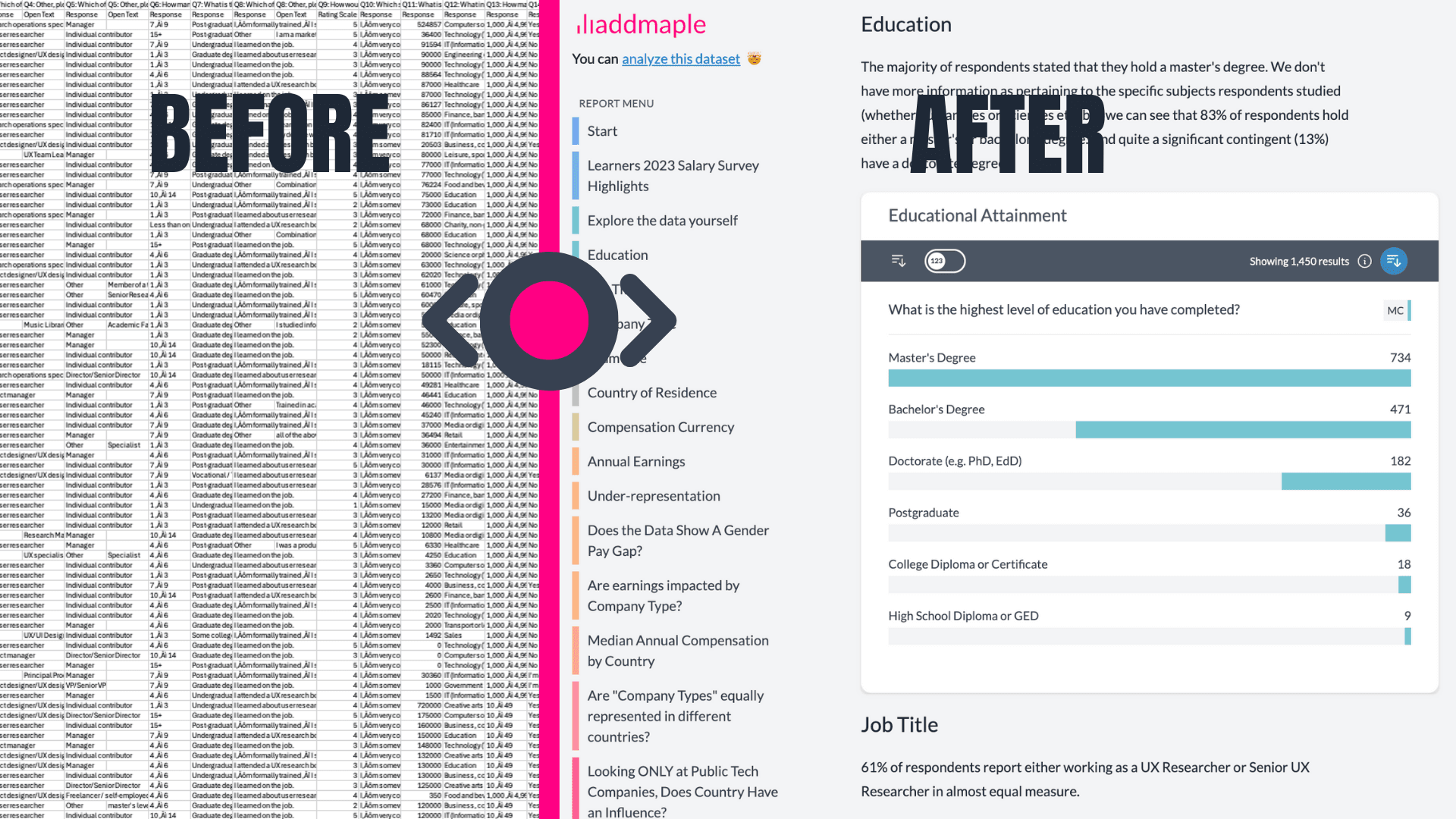

That small file-format problem exposed a much larger opportunity. Once Luiza saw that AddMaple could also analyze open-ended feedback, the platform became useful for the harder part of the workflow: understanding thousands of free-text responses without manually translating, keyword searching, and copy-pasting chunks into separate tools.

The value was immediate: file flexibility, faster exploration, easier text coding, and a more guided path through messy multilingual data.

"I just wanted an easy way to convert an SPSS file to CSV... and then, when I saw that you could do the analysis of this open-ended data, it just blew my mind."

4

The AddMaple workflow

AddMaple gave Luiza a single place to explore both the structured and unstructured parts of the survey.

Instead of manually hunting for patterns, Luiza could use dashboards and related statistics to explore which parts of the event experience were most closely associated with more satisfied or less satisfied customers. That is especially useful in festival feedback workflows, where overall satisfaction needs to be connected back to operational details like queues, security, food, pricing, toilets, crowd flow, and the wider experience around the music.

The same workflow also made it easier to move from scores into open-ended explanations. Scores alone can be dry. AddMaple helped quantify the topics appearing in written feedback, surface the strongest themes, and keep the consumer voice close through verbatim quotes.

Rapid report-ready exploration across large festival datasets.

5

From top-line scores to clear priorities

For Luiza, analysis is not just about knowing whether food, prices, or toilets scored well. It is about benchmarking those results against similar events, then using regression and related statistics to decide which three or four priorities will matter most for the next show.

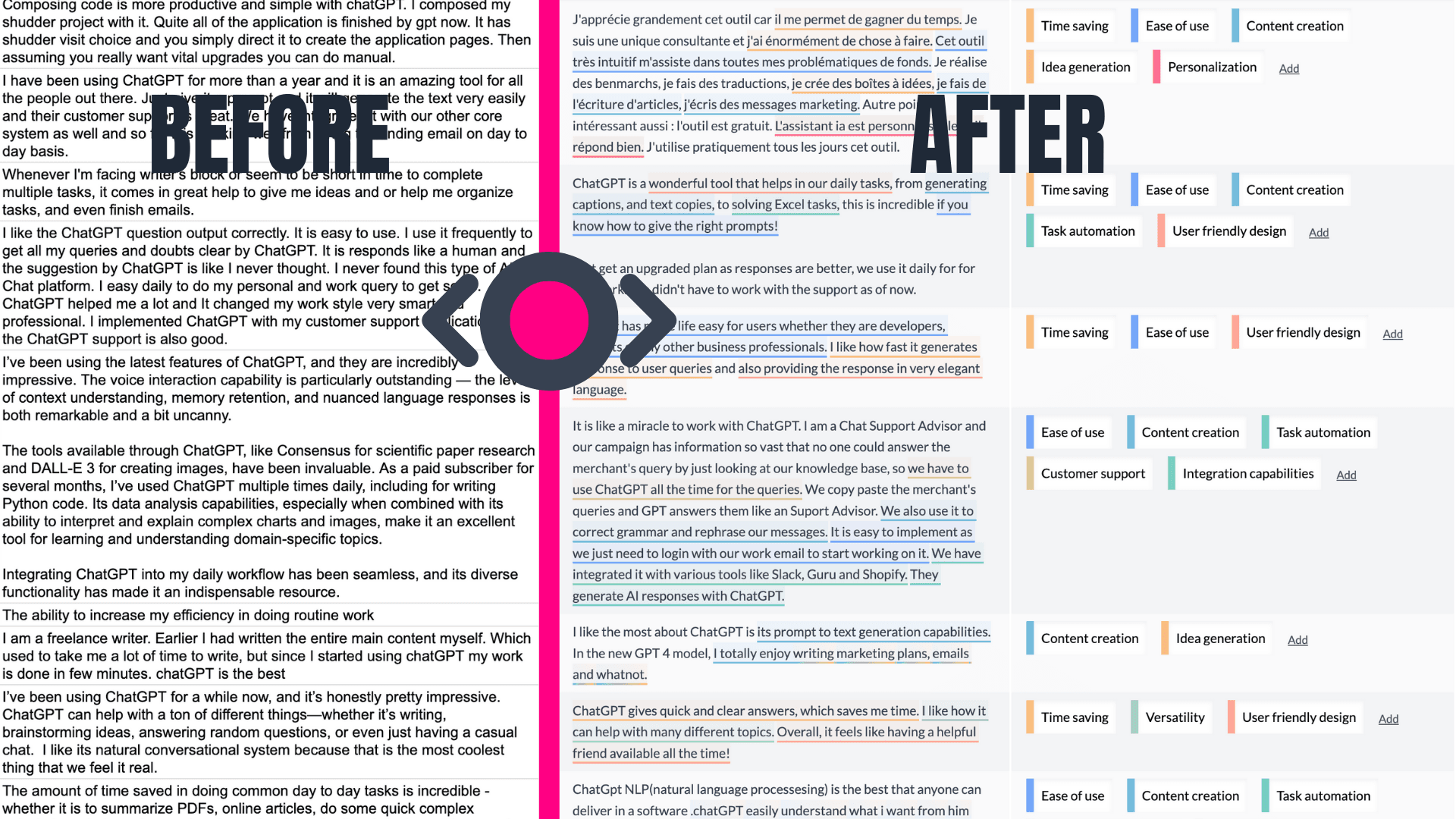
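To make the idea of ranking priorities concrete, here is a minimal Python sketch of a simple driver analysis. It is not AddMaple's method: it swaps in plain Pearson correlation as a simpler stand-in for regression, and the ratings, column names, and the `rank_drivers` helper are all hypothetical.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical 1-5 ratings from six respondents (illustrative data only).
responses = {
    "overall": [5, 4, 2, 3, 5, 1],
    "queues":  [5, 4, 1, 3, 4, 1],
    "food":    [3, 4, 3, 2, 4, 3],
    "toilets": [4, 3, 2, 3, 5, 2],
}

def rank_drivers(data, outcome="overall"):
    """Order each rated area by how strongly it tracks the outcome score."""
    scores = {
        area: pearson(ratings, data[outcome])
        for area, ratings in data.items()
        if area != outcome
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

ranked = rank_drivers(responses)
for area, r in ranked:
    print(f"{area}: {r:.2f}")
```

In this made-up data, the areas at the top of the list are the ones whose scores move most closely with overall satisfaction, which is the kind of signal that helps narrow a long list of ratings down to a few priorities. Correlation alone does not show cause, so a ranking like this is a starting point for discussion rather than a conclusion.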

AddMaple supports that process by combining automated heavy lifting with researcher control. The AI copilot can suggest the most important themes, while Luiza can merge similar topics, add specific issues raised by event teams, and check the tagging answer by answer.

The result is a faster route from broad survey results to focused recommendations, with enough transparency for Luiza to feel confident in the analysis she shares with stakeholders.

"I treat it almost as a co-pilot... the bulk of this heavy lifting is done by AddMaple, which is super, super time saving for me."

Text coding and theme exploration across multilingual festival feedback.

6

Making multilingual feedback usable

Multilingual analysis is central to this workflow. The portfolio spans roughly 15 countries, and international visitors often respond in the language they feel most comfortable in. That means English, Dutch, German, Hungarian, French, Nordic languages, and more can all appear across the same research workflow.

Before AddMaple, that meant translating headers, manually translating keywords, and searching for words like "queues" or "toilets" in each language. That approach could miss slang, nuance, or responses written in an unexpected language.
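The limits of that manual approach are easy to see in a small Python sketch. Everything here is hypothetical: the keyword lists, the sample responses, and the `mentions_queues` helper are invented for illustration and do not come from Luiza's data.

```python
# Hypothetical keyword lists a researcher might translate by hand.
QUEUE_KEYWORDS = {
    "en": ["queue"],
    "nl": ["wachtrij"],
    "de": ["schlange"],
}

def mentions_queues(response):
    """Return True if any hand-translated keyword appears in the response."""
    text = response.lower()
    return any(
        keyword in text
        for keywords in QUEUE_KEYWORDS.values()
        for keyword in keywords
    )

# Illustrative responses only.
responses = [
    "The queues at the bar were endless",     # English, keyword present
    "Lange Schlange vor dem Eingang",         # German, keyword present
    "Waited 45 minutes just to get a drink",  # same issue, no keyword used
    "Trop d'attente aux bars",                # French, no keyword list prepared
]

flags = [mentions_queues(r) for r in responses]
print(flags)
```

The last two responses describe waiting times but are not flagged, because one avoids the expected word and the other is written in a language nobody prepared a list for.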

With AddMaple, Luiza can analyze the original written feedback more directly. The platform can detect themes by meaning rather than relying on exact word matches, which is especially useful when respondents use slang or describe an issue without using the expected keyword. That helps more feedback make it into the analysis, even when it arrives in different languages or formats.

"If you feel more comfortable sharing feedback in your native language, please do so. So it's really nice to be able to take that feedback and give it the importance that perhaps you would have to compromise a bit in the past."

7

Turning unaided awareness questions, like artist mentions, into usable data

Festival surveys often include a deceptively difficult question: which artists do fans want to see next time? People love answering it, but analysis can be painful. Luiza remembered one festival where Billie Eilish appeared in 12 or 13 different spellings.

Previously, cleaning that kind of feedback meant manually coding names, interpreting misspellings, and extracting artist mentions from longer comments. With AddMaple, Luiza can treat the question like open text or convert it into a multiple-choice-style variable, quickly surfacing the most requested artists without months of cleanup.
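For a sense of what consolidating spellings involves, the sketch below groups variant spellings under one canonical name using fuzzy matching from Python's standard library. It is not how AddMaple works internally, which the source does not describe. The answer list, the `CANONICAL` names, the `normalize` helper, and the 0.75 cutoff are all assumptions chosen for illustration.

```python
from collections import Counter
from difflib import get_close_matches

# Hypothetical list of known artist names to match against.
CANONICAL = ["Billie Eilish", "Dua Lipa"]

def normalize(raw, known=CANONICAL, cutoff=0.75):
    """Map a free-text answer to the closest known artist, or None."""
    lookup = {name.lower(): name for name in known}
    matches = get_close_matches(
        raw.strip().lower(), list(lookup), n=1, cutoff=cutoff
    )
    return lookup[matches[0]] if matches else None

# Illustrative answers only, including misspellings and a non-answer.
answers = [
    "billie eilish",
    "Billy Eilish",
    "Billie Eyelish",
    "Bilie Ellish",
    "Dua Lipa",
    "dua lippa",
    "surprise us",
]

counts = Counter(normalize(answer) for answer in answers)
for artist, total in counts.most_common():
    print(artist, total)
```

A sketch like this only works when the list of expected artists is already known and each answer contains a single name. It would not pull artist mentions out of longer comments, which is part of why the manual route was so slow.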

That changes the role of the question in the research program. Instead of treating artist requests as a slow end-of-season cleanup task, Luiza can use them as a repeatable input into event planning throughout the year. For her team, that means more speed, more certainty, and a clearer view of what audiences want next.

"There were, I think, 12 or 13 different ways in which people wrote Billie Eilish... now we have speed to analyze it. It's not going to take us months."